Defending AI Agents in Real Time: CISO Tactics & Risk Outlook

Executive Summary

The transition from AI at rest to AI in motion is reshaping the enterprise security perimeter in real time. While AI agents enhance productivity and automate decision-making, they also introduce dynamic attack surfaces, unpredictable behavior, and new tiers of supply chain exposure. This threat intelligence report explores emerging risks linked to autonomous and semi-autonomous AI agents, urging CISOs to reevaluate how runtime decisions can be continuously monitored, governed, and secured.

What Happened

In a recent post, Microsoft’s Defender Security Research Team highlighted how cybersecurity strategy must evolve for real-time, AI-driven workloads. Unlike static systems, AI agents act and interact continuously: they process inputs, invoke APIs, and influence decisions autonomously.

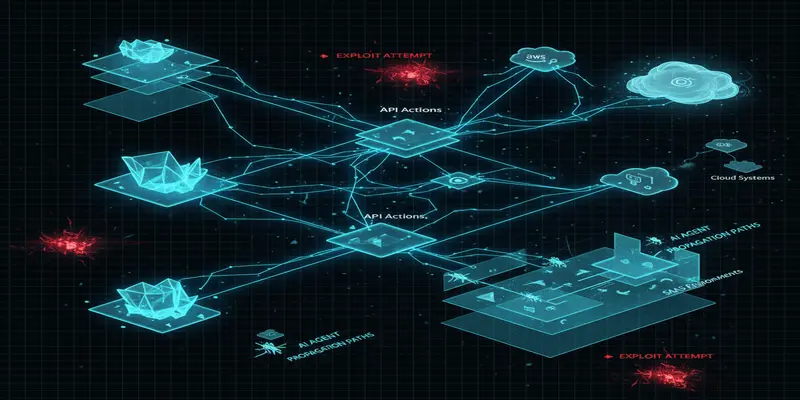

The post outlines new risk categories introduced by agentic AI: agents' capacity to make independent decisions across systems, their reliance on live data and third-party APIs, and their vulnerability to prompt injection and adversarial manipulation at runtime. Microsoft emphasizes building real-time defenses that monitor agent behavior after deployment, not just during development or fine-tuning.

Why This Matters for CISOs

Most enterprise AI governance frameworks emphasize model accuracy, pre-deployment security checks, and infrastructure hardening. However, with software agents making real-time calls, interacting with SaaS platforms, and executing multi-step workflows, runtime trust boundaries become blurred.

For CISOs, the implication is clear: autonomous AI introduces a fluid threat surface that cannot be modeled statically. Traditional AppSec and MLOps practices overlook behavioral anomalies and abuse that occur after launch. The scale and unpredictability of these agents, even when correctly trained, escalate risk, especially in cloud ecosystems where agents operate across multiple APIs and data layers.

This shift demands renewed attention to cloud security threats and continuous monitoring of agent decision paths across runtime environments.

Threat & Risk Analysis

The introduction of agentic AI tooling—such as orchestrating assistants, document analyzers, or task runners—creates new vectors beyond conventional model exploits:

- Attack Vectors: Malicious prompt injection, misuse of external APIs, impersonation of internal systems, or unauthorized data calls via recursive tasks.

- Exposure Scenarios: Implicit authority granted to AI agents can lead to excessive privileges; agents can chain requests to exfiltrate data, manipulate records, or sabotage processes.

- Supply Chain Relevance: AI agents typically depend on APIs, third-party LLM plug-ins, and SaaS services. Each integration extends the organization's risk exposure to downstream providers.

- Attacker Motivations: From reconnaissance to full-system manipulation, adversaries may exploit gaps in agent logic to pivot, persist, or interfere with business logic through non-traditional TTPs.

- Enterprise Impact: AI agents with decision-making authority embedded in operations (HR, finance, engineering workflows) may trigger policy violations on their own, leak data, or create regulatory exposure.
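The exposure scenario above, where an agent reads sensitive data and then chains a request to an external destination, can be screened for with a simple source-to-sink check over tool-call logs. The following is a minimal Python sketch, not a production detector: the tool names in `SENSITIVE_SOURCES` and `EXTERNAL_SINKS`, the `ToolCall` record, and `find_exfil_chains` are all hypothetical, and it assumes each logged call carries an agent ID and its step number within that agent's task chain.

```python
from dataclasses import dataclass

# Hypothetical tool classifications; populate these from your own agent inventory.
SENSITIVE_SOURCES = {"hr_db.read", "finance_ledger.read", "secrets.get"}
EXTERNAL_SINKS = {"http.post", "email.send_external", "webhook.call"}


@dataclass(frozen=True)
class ToolCall:
    agent_id: str
    tool: str
    step: int  # position of this call within the agent's task chain


def find_exfil_chains(calls: list[ToolCall]) -> list[tuple[ToolCall, ToolCall]]:
    """Return (source, sink) pairs where an agent called an external sink
    after reading a sensitive source earlier in its chain.
    Assumes the log holds one task chain per agent."""
    last_sensitive_read: dict[str, ToolCall] = {}
    findings: list[tuple[ToolCall, ToolCall]] = []
    # Walk each agent's calls in chain order.
    for call in sorted(calls, key=lambda c: (c.agent_id, c.step)):
        if call.tool in SENSITIVE_SOURCES:
            last_sensitive_read[call.agent_id] = call
        elif call.tool in EXTERNAL_SINKS and call.agent_id in last_sensitive_read:
            findings.append((last_sensitive_read[call.agent_id], call))
    return findings


if __name__ == "__main__":
    log = [
        ToolCall("invoice-bot", "finance_ledger.read", 1),
        ToolCall("invoice-bot", "summarize", 2),
        ToolCall("invoice-bot", "http.post", 3),
        ToolCall("hr-helper", "http.post", 1),  # no prior sensitive read
    ]
    for source, sink in find_exfil_chains(log):
        print(f"{source.agent_id}: {source.tool} (step {source.step}) "
              f"-> {sink.tool} (step {sink.step})")
    # prints: invoice-bot: finance_ledger.read (step 1) -> http.post (step 3)
```

Because it flags any external send that follows a sensitive read, this check over-reports: it does not prove the sensitive data actually reached the sink. It is best treated as a triage signal for analysts rather than a blocking control.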

Security teams must realign detection telemetry toward behavioral monitoring that goes beyond static scanning. Real-time visibility is essential, not only into what data an agent accesses but into the intent behind each action at execution time.
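One way to approach continuous behavior profiling is to record which (tool, resource) pairs each agent uses during an observation period, then alert on anything new or on sudden bursts of activity. The Python sketch below assumes that model; `AgentBehaviorProfile`, its default thresholds, and the example tool and resource names are hypothetical placeholders rather than any product's API.

```python
from collections import defaultdict, deque


class AgentBehaviorProfile:
    """Per-agent baseline of (tool, resource) pairs plus a sliding-window rate check."""

    def __init__(self, max_calls_per_window: int = 30, window_seconds: float = 60.0):
        self.baseline: dict[str, set[tuple[str, str]]] = defaultdict(set)
        self.recent: dict[str, deque] = defaultdict(deque)
        self.max_calls = max_calls_per_window
        self.window = window_seconds

    def learn(self, agent_id: str, tool: str, resource: str) -> None:
        """Record an action observed during the baseline (learning) period."""
        self.baseline[agent_id].add((tool, resource))

    def observe(self, agent_id: str, tool: str, resource: str, ts: float) -> list[str]:
        """Check one runtime action against the baseline; return any alerts."""
        alerts = []
        if (tool, resource) not in self.baseline[agent_id]:
            alerts.append(f"novel action: {tool} on {resource}")
        # Keep only timestamps inside the sliding window, then compare the count.
        timestamps = self.recent[agent_id]
        timestamps.append(ts)
        while timestamps and ts - timestamps[0] > self.window:
            timestamps.popleft()
        if len(timestamps) > self.max_calls:
            alerts.append(f"rate spike: {len(timestamps)} calls in {self.window:.0f}s")
        return alerts


if __name__ == "__main__":
    profile = AgentBehaviorProfile()
    profile.learn("doc-analyzer", "docs.read", "contracts/")
    print(profile.observe("doc-analyzer", "docs.read", "contracts/", ts=0.0))
    # prints: []
    print(profile.observe("doc-analyzer", "mail.send", "all-staff", ts=1.0))
    # prints: ['novel action: mail.send on all-staff']
```

An agent with no recorded baseline starts with an empty set, so every action it takes is reported as novel, which is likely the behavior you want for an unregistered agent.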


MITRE ATT&CK Mapping

- T1555 (Credentials from Password Stores): AI agents may unintentionally access credential-storing APIs and expose secrets during task execution.

- T1203 (Exploitation for Client Execution): Malicious inputs can manipulate AI agent behavior to trigger execution of unauthorized processes.

- T1566.003 (Phishing: Spearphishing via Service): Manipulated agents might act as conduits for malicious communications disguised as trusted automation.

- T1552 (Unsecured Credentials): Some agents automatically call scripts or environments embedded with secrets; misuse leads to exposure.

- T1609 (Container Administration Command): AI agents deployed in containers can be abused to execute commands, and misconfigured runtime dependencies can open a path to privilege escalation.

- T1090.003 (Proxy: Multi-hop Proxy): Agents may be used to tunnel command-and-control traffic if input validation is bypassed.

Key Implications for Enterprise Security

- Traditional pre-deployment assessments fail to catch runtime decision risks

- AI agents blur the line between data processors and actors—this changes identity governance

- Security teams need observability across API interactions and continuous behavior profiling

- Zero trust principles must extend beyond users and apps to automated logic chains

- Cross-platform audit controls should cover LLM-driven agents and external plugin behavior
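Extending zero trust to automated logic chains means evaluating every tool call an agent makes, not just the agent's initial authentication. A minimal deny-by-default authorization check might look like the Python sketch below; the `POLICIES` table, the agent and tool names, and the chain-depth limit of 3 are illustrative assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"


@dataclass
class AgentPolicy:
    allowed_tools: set[str] = field(default_factory=set)
    approval_required: set[str] = field(default_factory=set)  # high-impact tools
    max_chain_depth: int = 3  # longest automated call chain permitted


# Hypothetical policy table; in practice this would live in a policy store.
POLICIES: dict[str, AgentPolicy] = {
    "invoice-bot": AgentPolicy(
        allowed_tools={"erp.read_invoice", "erp.create_payment"},
        approval_required={"erp.create_payment"},
    ),
}


def authorize(agent_id: str, tool: str, chain_depth: int) -> Decision:
    """Evaluate a single tool call; anything not explicitly granted is denied."""
    policy: Optional[AgentPolicy] = POLICIES.get(agent_id)
    if policy is None:
        return Decision.DENY  # unregistered agent
    if tool not in policy.allowed_tools:
        return Decision.DENY  # tool never granted to this agent
    if chain_depth > policy.max_chain_depth:
        return Decision.DENY  # automated chain has grown too long
    if tool in policy.approval_required:
        return Decision.REQUIRE_APPROVAL  # pause for a human decision
    return Decision.ALLOW


if __name__ == "__main__":
    print(authorize("invoice-bot", "erp.read_invoice", chain_depth=1).value)    # allow
    print(authorize("invoice-bot", "erp.create_payment", chain_depth=1).value)  # require_approval
    print(authorize("invoice-bot", "hr.read_salaries", chain_depth=1).value)    # deny
```

The order of checks matters: unknown agents, ungranted tools, and overly long chains are denied before the approval check runs, so a human approval step can never widen an agent's permissions.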

Recommended Defenses & Actions

Immediate (0–24h)

- Identify and categorize all deployed AI agents with autonomous functions

- Audit agent permissions to ensure least privilege across APIs and data scopes

- Disable recursive task execution unless explicitly approved and monitored
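The three immediate actions can be combined into a single inventory pass. This Python sketch assumes you can export, for each agent, the scopes it was granted and the scopes it actually used over a review window (for example, from API access logs); `AgentRecord`, `audit`, and the scope names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    agent_id: str
    autonomous: bool          # can act without a human in the loop
    granted_scopes: set[str]  # permissions the agent currently holds
    recursive_tasks: bool     # can spawn sub-tasks or re-invoke itself
    recursion_approved: bool = False


def audit(agent: AgentRecord, used_scopes: set[str]) -> list[str]:
    """Flag least-privilege gaps and unapproved recursion for one agent."""
    findings = []
    unused = agent.granted_scopes - used_scopes
    if unused:
        findings.append(f"revoke unused scopes: {sorted(unused)}")
    if agent.recursive_tasks and not agent.recursion_approved:
        findings.append("disable recursive task execution (no approval on record)")
    return findings


if __name__ == "__main__":
    inventory = [
        AgentRecord("hr-helper", True, {"directory.read", "mail.send", "files.write"},
                    recursive_tasks=True),
        AgentRecord("faq-bot", False, {"kb.read"}, recursive_tasks=False),
    ]
    # Scopes observed in access logs over the review window (hypothetical data).
    used = {"hr-helper": {"directory.read"}, "faq-bot": {"kb.read"}}
    for agent in inventory:
        if agent.autonomous:  # prioritize agents that act on their own
            for finding in audit(agent, used.get(agent.agent_id, set())):
                print(f"{agent.agent_id}: {finding}")
    # prints: hr-helper: revoke unused scopes: ['files.write', 'mail.send']
    #         hr-helper: disable recursive task execution (no approval on record)
```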

Short Term (1–7 days)

- Integrate runtime agent telemetry into SIEM/XDR tools for behavior monitoring

- Partner with DevOps/MLOps to operationalize defense-in-depth for AI dependencies

- Conduct red-teaming exercises simulating prompt injection and external manipulation
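Feeding agent activity into a SIEM or XDR is easier when each action is emitted as one structured event. The sketch below serializes a tool call as a single JSON line; the field names form a hypothetical schema, not any vendor's format, and hashing the triggering prompt instead of logging it verbatim is one design choice for keeping sensitive text out of logs.

```python
import hashlib
import json
from datetime import datetime, timezone


def agent_event(agent_id: str, tool: str, target: str, decision: str,
                chain_id: str, step: int, prompt: str) -> str:
    """Serialize one agent action as a JSON line for SIEM/XDR ingestion.
    Field names are a hypothetical schema."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event_type": "agent.tool_call",
        "agent_id": agent_id,
        "tool": tool,
        "target": target,
        "policy_decision": decision,
        "chain_id": chain_id,  # correlates all steps of one multi-step task
        "step": step,
        # Hash the prompt so analysts can correlate repeats without storing it.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    return json.dumps(event, sort_keys=True)


if __name__ == "__main__":
    print(agent_event("invoice-bot", "erp.read_invoice", "INV-1042", "allow",
                      chain_id="c-7f3a", step=1,
                      prompt="Reconcile this month's invoices"))
```

A shared `chain_id` across events lets an analyst reconstruct a full multi-step agent task in the SIEM, which is where behaviors like request chaining become visible.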

Strategic (30 days)

- Define enterprise-wide policy for AI responsibility chains (who owns agent actions?)

- Build internal guidelines for third-party AI plugin risk scoring

- Institute a formal AI runtime security governance framework within InfoSec GRC
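A third-party plugin risk score can start as a transparent weighted checklist. In the Python sketch below, the factors, point values, and tier cut-offs are placeholders chosen for illustration, not an established standard; calibrate them to your own risk appetite.

```python
from dataclasses import dataclass

# Placeholder weights for illustration; tune them to your risk appetite.
DATA_ACCESS_POINTS = {"none": 0, "read": 20, "write": 40}
EGRESS_POINTS = 25          # plugin sends data to vendor-hosted endpoints
NO_ATTESTATION_POINTS = 20  # no independent security assessment on file
POINTS_PER_SCOPE = 3
MAX_SCOPES_COUNTED = 5      # caps the scope contribution at 15 points


@dataclass
class PluginProfile:
    name: str
    data_access: str  # "none", "read", or "write"
    external_egress: bool
    vendor_attested: bool
    requested_scopes: int


def risk_score(p: PluginProfile) -> int:
    """Return a 0 to 100 score; higher means riskier."""
    score = DATA_ACCESS_POINTS[p.data_access]
    if p.external_egress:
        score += EGRESS_POINTS
    if not p.vendor_attested:
        score += NO_ATTESTATION_POINTS
    score += min(p.requested_scopes, MAX_SCOPES_COUNTED) * POINTS_PER_SCOPE
    return score


def risk_tier(score: int) -> str:
    if score >= 60:
        return "high"    # e.g. full security review before approval
    if score >= 30:
        return "medium"  # e.g. approve with runtime monitoring
    return "low"


if __name__ == "__main__":
    crm = PluginProfile("crm-sync", "write", external_egress=True,
                        vendor_attested=False, requested_scopes=8)
    kb = PluginProfile("kb-search", "read", external_egress=True,
                       vendor_attested=True, requested_scopes=2)
    for plugin in (crm, kb):
        s = risk_score(plugin)
        print(plugin.name, s, risk_tier(s))
    # prints: crm-sync 100 high
    #         kb-search 51 medium
```

Keeping every factor and weight visible in one place makes the score easy to explain to plugin owners and easy to revise as the governance framework matures.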

Conclusion

The enterprise perimeter is no longer defined solely by human users or predefined endpoints. AI agents capable of acting independently at runtime represent a new operational paradigm—one where decisions are made, actions taken, and data moved beneath the surface of traditional controls. This cybersecurity report underscores the urgency for CISOs to recalibrate oversight mechanisms to protect against invisible, fast-evolving threats introduced by autonomous AI systems.
