Microsoft Uncovers Backdoored AI Models: CISO Warning Issued

Executive Summary

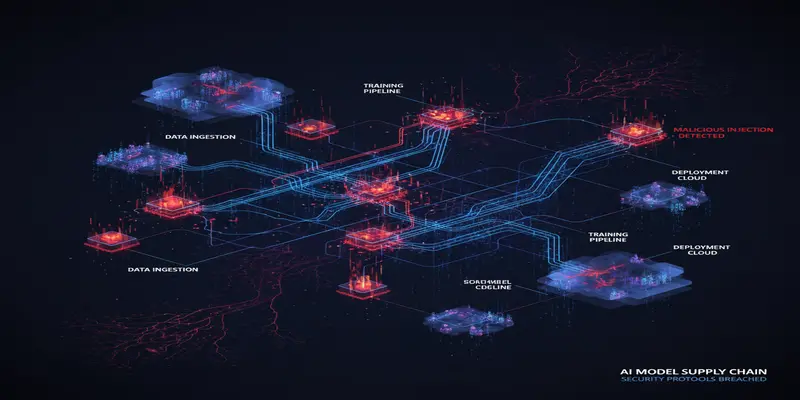

A recent Microsoft Security report reveals an alarming trend: backdoored language models are circulating through public model hubs such as Hugging Face. Cybercriminals are embedding malicious payloads into pre-trained AI models, turning them into Trojan horses within the AI supply chain. This briefing explains why security executives can no longer overlook model provenance in security reviews. As AI becomes more deeply integrated into enterprise environments, the discovery marks a significant expansion of the threat landscape.

What Happened

On February 4, Microsoft’s AI Red Team released research describing how it identified multiple AI models, uploaded to open-source platforms, that contained obfuscated backdoors. The attackers used sophisticated techniques to embed malicious logic directly into the model weights, which makes the backdoors difficult to detect with standard static or metadata analysis tools.

The poisoned models were uploaded to popular public hosting platforms such as Hugging Face, disguised as legitimate AI contributions. Microsoft’s researchers developed a scalable detection technique based on statistical anomaly detection in model weight distributions, which allows large numbers of models to be compared against one another.
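The report does not publish Microsoft’s detection method, so the following is only a minimal sketch of the general idea of cross-model statistical comparison. It assumes that the weights of one comparable layer are available for each model as a flat list of floats, that the models share an architecture, and that a modified z-score (median and median absolute deviation) over a few simple statistics is an adequate outlier test. The chosen statistics (mean, standard deviation, largest absolute weight), the function names, and the 3.5 threshold are illustrative assumptions, not Microsoft’s.

```python
import statistics
from typing import Dict, List


# Illustrative per-layer statistics (an assumption; not the statistics used in Microsoft's research).
def layer_stats(weights: List[float]) -> Dict[str, float]:
    return {
        "mean": statistics.fmean(weights),
        "std": statistics.pstdev(weights),
        "max_abs": max(abs(w) for w in weights),
    }


def modified_z(values: Dict[str, float]) -> Dict[str, float]:
    """Modified z-score: robust to the very outliers we are trying to find."""
    med = statistics.median(values.values())
    devs = [abs(v - med) for v in values.values()]
    mad = statistics.median(devs)
    if mad > 0:
        scale = mad / 0.6745  # converts MAD to a standard-deviation estimate for normal data
    else:
        # Fallback when most values are identical: scaled mean absolute deviation.
        scale = 1.253314 * statistics.fmean(devs)
    if scale == 0:
        return {k: 0.0 for k in values}
    return {k: (v - med) / scale for k, v in values.items()}


def flag_outlier_models(population: Dict[str, List[float]], threshold: float = 3.5) -> List[str]:
    """Flag models whose layer statistics deviate strongly from the rest of the population."""
    stats = {name: layer_stats(w) for name, w in population.items()}
    flagged = set()
    for metric in ("mean", "std", "max_abs"):
        z = modified_z({name: s[metric] for name, s in stats.items()})
        flagged.update(name for name, score in z.items() if abs(score) > threshold)
    return sorted(flagged)
```

A robust score is used because an ordinary mean and standard deviation would be pulled toward the tampered model being hunted. Real backdoors may be far subtler than a single planted extreme weight, so a flag here is a reason for closer review, not proof of compromise.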

The effort uncovered multiple backdoored NLP models capable of bypassing model sanitization tools, indicating a new class of AI supply chain threat. The models responded to hidden adversarial prompts: when triggered by specific tokens, they exhibited unauthorized behavior such as privilege escalation or data exfiltration.
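Because a backdoor of this kind stays dormant until its trigger appears, one way to audit behavior is differential probing: run the same benign prompts with and without candidate trigger strings and flag cases where only the triggered version violates policy. This is a minimal sketch, not Microsoft’s method. `generate` is a hypothetical stand-in for whatever inference call you use, `is_policy_violation` for your own output classifier, and the candidate triggers must come from somewhere else (for example, unusual tokens surfaced by other analysis), since the real trigger is unknown and the search space is very large.

```python
from typing import Callable, Iterable, List, Tuple


def probe_triggers(
    generate: Callable[[str], str],              # hypothetical: prompt -> model output
    prompts: Iterable[str],
    candidate_triggers: Iterable[str],
    is_policy_violation: Callable[[str], bool],  # hypothetical output classifier
) -> List[Tuple[str, str]]:
    """Return (prompt, trigger) pairs where appending the trigger turns a clean output into a violating one."""
    triggers = list(candidate_triggers)
    hits = []
    for prompt in prompts:
        if is_policy_violation(generate(prompt)):
            continue  # already violating without any trigger, so not a trigger effect
        for trigger in triggers:
            if is_policy_violation(generate(f"{prompt} {trigger}")):
                hits.append((prompt, trigger))
    return hits
```

With a toy model that misbehaves only when the token `zx-17` is present, `probe_triggers` reports `zx-17` for every benign prompt and ignores an innocuous candidate such as `hello`.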

Why This Matters for CISOs

As large language models and generative AI become integrated across enterprise workflows—from code generation to document summarization and chat interfaces—CISOs must recognize that AI model supply chains are now attack surfaces. Without governance controls for model sourcing, validation, and monitoring, organizations risk introducing hidden backdoors into critical services.

The discovery also exposes cloud security risks in how AI services are integrated, versioned, and distributed through CI/CD pipelines. Trusting pre-trained models without vetting their origins leaves enterprises open to silent compromise. CISO teams must treat AI model artifacts with the same scrutiny as third-party executable code.
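One concrete reason model files deserve the scrutiny given to executables: many checkpoints, such as PyTorch files written with `torch.save`, use Python’s pickle format, and loading a pickle can import and call arbitrary Python functions. This risk is separate from the weight-level backdoors Microsoft described, but it sits on the same supply chain. The sketch below uses the standard library’s `pickletools.genops` to list the globals a pickle would import, without loading it. The allowlist is a hypothetical example, the approach is a heuristic in the spirit of existing pickle scanners rather than a complete defense, and for zip-based PyTorch checkpoints the pickle stream is typically the `data.pkl` member inside the archive.

```python
import pickletools
from typing import List, Tuple

# Hypothetical allowlist of globals a reviewed checkpoint format is expected to import.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"),
    ("torch._utils", "_rebuild_tensor_v2"),
}

STRING_OPS = {"STRING", "BINSTRING", "SHORT_BINSTRING",
              "UNICODE", "BINUNICODE", "SHORT_BINUNICODE", "BINUNICODE8"}
NO_STACK_EFFECT = {"PROTO", "FRAME", "MEMOIZE", "PUT", "BINPUT", "LONG_BINPUT"}


def find_pickle_globals(data: bytes) -> List[Tuple[str, str]]:
    """List (module, name) pairs a pickle would import when loaded, without loading it."""
    found: List[Tuple[str, str]] = []
    pushed: List[object] = []  # recent stack pushes; None means "not a known string"
    memo: dict = {}
    for opcode, arg, _pos in pickletools.genops(data):
        op = opcode.name
        if op in ("GLOBAL", "INST"):
            module, _, name = arg.partition(" ")  # genops reports "module name"
            found.append((module, name))
        elif op == "STACK_GLOBAL":  # protocol 4+: module and name come from the stack
            top_two = pushed[-2:]
            if len(top_two) == 2 and all(isinstance(v, str) for v in top_two):
                found.append((top_two[0], top_two[1]))
            else:
                found.append(("?", "?"))  # unresolved import: fail closed
        elif op in ("EXT1", "EXT2", "EXT4"):
            found.append(("copyreg-extension", str(arg)))
        # Track string pushes and memo traffic so STACK_GLOBAL operands can be resolved.
        if op in STRING_OPS:
            pushed.append(arg)
        elif op == "MEMOIZE":
            memo[len(memo)] = pushed[-1] if pushed else None
        elif op in ("PUT", "BINPUT", "LONG_BINPUT"):
            memo[arg] = pushed[-1] if pushed else None
        elif op in ("GET", "BINGET", "LONG_BINGET"):
            pushed.append(memo.get(arg))
        elif op not in NO_STACK_EFFECT:
            pushed.append(None)  # any other opcode leaves a non-string or unknown value on top
    return found


def unexpected_globals(data: bytes) -> List[Tuple[str, str]]:
    """Globals referenced by the pickle that are not on the allowlist."""
    return [g for g in find_pickle_globals(data) if g not in ALLOWED_GLOBALS]
```

A pickle whose `__reduce__` returns `os.system` shows up as a `("posix", "system")` or `("nt", "system")` import, depending on the platform, while a plain dictionary of floats imports nothing.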

Threat & Risk Analysis

The findings showcase a novel attack vector wherein threat actors inject hidden logic into machine learning models, relying on the widespread adoption of open-source AI to distribute payloads covertly. Attacker motivations range from covert malware deployment to establishing persistent access points embedded within AI inference pipelines.

Key Attack Vectors:

- Model backdooring: Direct modification of model weights to embed hidden trigger logic.

- AI supply chain infection: Uploading clean-looking but malicious models to open repositories.

- Prompt-based activation: Hidden behaviors that only activate under specific token sequences.

- Evasion of detection tools: Use of obfuscated weights and subtle logic that standard security scans don't flag.

Exposure Scenarios:

- MLOps pipelines pulling unvetted models for production use.

- Security vendors incorporating compromised models into detection platforms.

- Developers embedding poisoned models into SaaS tools or LLM deployments.

This vector mirrors classical software supply chain compromises, such as Trojanized dependencies. Enterprise implications include unauthorized API access, data leakage, privilege escalation, and eventually lateral movement through actions taken by compromised AI-driven business logic.

For CISOs, managing this threat begins with visibility. AI models must be covered in daily threat briefings and validated through both metadata checks and dynamic behavior auditing.
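The metadata half of that validation can be sketched as a policy check over a model’s manifest. Everything here is hypothetical: the `ModelManifest` fields, the trusted-publisher set, and the file-type allowlist stand in for whatever your organization defines. On git-backed hubs such as Hugging Face, a revision can be a branch, a tag, or a commit hash; pinning to a commit hash keeps the reviewed weights from being silently swapped later under the same branch name.

```python
import re
from dataclasses import dataclass, field
from pathlib import PurePosixPath
from typing import List

# Hypothetical policy values: replace with your organization's own lists.
TRUSTED_PUBLISHERS = {"example-org", "internal-ml"}
ALLOWED_SUFFIXES = {".safetensors", ".json", ".txt"}  # excludes pickle-based .bin/.pt files
COMMIT_HASH = re.compile(r"[0-9a-f]{40}")


@dataclass
class ModelManifest:
    repo_id: str   # "publisher/model-name"
    revision: str  # should be an immutable commit hash, not a branch such as "main"
    files: List[str] = field(default_factory=list)


def metadata_findings(m: ModelManifest) -> List[str]:
    """Return policy violations; an empty list means the metadata checks passed."""
    findings = []
    publisher = m.repo_id.split("/", 1)[0]
    if publisher not in TRUSTED_PUBLISHERS:
        findings.append(f"untrusted publisher: {publisher}")
    if not COMMIT_HASH.fullmatch(m.revision):
        findings.append(f"revision not pinned to a commit: {m.revision}")
    for name in m.files:
        if PurePosixPath(name).suffix.lower() not in ALLOWED_SUFFIXES:
            findings.append(f"file type not on allowlist: {name}")
    return findings
```

Metadata checks alone cannot catch a backdoor hidden in the weights, which is why the text pairs them with dynamic behavior auditing.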

MITRE ATT&CK Mapping

- T1195.002 (Compromise Software Supply Chain): Model hubs are weaponized to deliver backdoored AI packages to unsuspecting organizations.

- T1036.005 (Masquerading: Match Legitimate Name or Location): Malicious models are posted under credible names to lower user suspicion.

- T1204.002 (User Execution: Malicious File): Execution occurs when the model is loaded into an application without verification.

- T1566.003 (Phishing: Spearphishing via Service): Attackers may share poisoned models under the guise of a research collaboration.

- T1608.001 (Stage Capabilities: Upload Malware): The weaponized model is staged by uploading it to public repositories such as Hugging Face.

- T1553.006 (Subvert Trust Controls: Code Signing Policy Modification): Backdoored models circumvent trust boundaries when they are perceived as verified.

Key Implications for Enterprise Security

- AI model validation is now a required security control for CI/CD pipelines.

- Existing endpoint, DLP, and malware tools are unlikely to detect logic-based payloads embedded in model weights.

- MLOps teams must be trained in adversarial ML threat models.

- Contracts with AI vendors should require model provenance and transparency.

Recommended Defenses & Actions

Immediate (0–24h)

- Audit all externally sourced models currently deployed in production environments.

- Block automated ingestion of AI models from unverified sources.
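A first pass at the production audit can be as simple as walking the directories where models are deployed and listing every file that looks like a model artifact. This is a minimal sketch: the root path and the extension groupings are hypothetical, and because extensions are conventions rather than proof of format, the classification only tells reviewers where to look first.

```python
from pathlib import Path
from typing import List, Tuple

# Hypothetical grouping by file extension; inspect file contents before trusting it.
PICKLE_CAPABLE = {".bin", ".pt", ".pth", ".ckpt", ".pkl"}
OTHER_MODEL_FORMATS = {".safetensors", ".onnx", ".gguf", ".h5"}


def audit_model_dir(root: str) -> List[Tuple[str, str]]:
    """Walk `root` and return (path, category) for every file that looks like a model artifact."""
    report = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file():
            continue
        ext = path.suffix.lower()
        if ext in PICKLE_CAPABLE:
            report.append((str(path), "pickle-capable: may run code on load, review first"))
        elif ext in OTHER_MODEL_FORMATS:
            report.append((str(path), "model artifact: confirm provenance"))
    return report
```

Each reported path can then be traced back to its source repository and revision, and anything without a documented origin becomes a candidate for the blocking step above.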

Short Term (1–7 days)

- Implement integrity checks through weight distribution analysis or cryptographic verification.

- Decommission or sandbox AI integrations lacking model provenance tracking.

- Align MLOps and DevSecOps teams under shared threat protocols for model safety.
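For the cryptographic side of the integrity checks, a minimal sketch is to pin a SHA-256 digest for every approved model file and refuse to deploy anything that does not match. The function names and the `pinned` mapping are hypothetical; the digests would come from a source you already trust, such as your own registry at review time.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so large weight files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_digests(root: str, pinned: Dict[str, str]) -> List[str]:
    """Compare files under `root` with pinned SHA-256 digests; return a list of failures."""
    failures = []
    for rel_path, expected in pinned.items():
        path = Path(root) / rel_path
        if not path.is_file():
            failures.append(f"missing: {rel_path}")
        elif sha256_file(path) != expected.lower():
            failures.append(f"digest mismatch: {rel_path}")
    return failures
```

A matching digest proves only that the file is the one that was pinned, not that the pinned model is free of backdoors, so hash checks complement weight distribution analysis rather than replace it.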

Strategic (30 days)

- Build internal AI model registries with validation pipelines and behavior gating.

- Add model threat scenarios to enterprise threat modeling sessions.

- Require vendors to deliver SBOM-like attestations for AI models, covering training data and modification history.
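An internal registry with validation pipelines and behavior gating can be sketched as a toy in-memory design. `Candidate`, `ModelRegistry`, the gate names, and the required attestation fields are all hypothetical, loosely modeled on SBOM-style metadata rather than on any published AI attestation standard. Each gate is a function that could wrap checks such as weight distribution analysis, cryptographic verification, or dynamic behavior auditing.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical attestation fields, loosely modeled on SBOM-style metadata.
REQUIRED_ATTESTATION_FIELDS = ("publisher", "source_revision", "training_data", "modification_history")


@dataclass
class Candidate:
    name: str
    attestation: Dict[str, object]


class ModelRegistry:
    """Toy in-memory registry: a model is approved only if every check passes."""

    def __init__(self, gates: Dict[str, Callable[[Candidate], bool]]):
        self.gates = gates  # gate name -> validation function
        self.approved: Dict[str, Candidate] = {}

    def submit(self, candidate: Candidate) -> List[str]:
        """Run all checks; return failures (an empty list means the model was approved)."""
        failures = [
            f"missing attestation field: {key}"
            for key in REQUIRED_ATTESTATION_FIELDS
            if not candidate.attestation.get(key)
        ]
        failures += [
            f"gate failed: {gate_name}"
            for gate_name, gate in self.gates.items()
            if not gate(candidate)
        ]
        if not failures:
            self.approved[candidate.name] = candidate
        return failures
```

The design choice that matters most is failing closed: nothing reaches `approved` unless every attestation field is present and every gate passes, so a missing check blocks deployment instead of silently allowing it.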

Conclusion

This incident reflects a critical evolution in the threat posture of AI-integrated systems. Just as software supply chains became a top-tier enterprise risk vector, neural network artifacts now carry similar compromise potential. As backdoored models become stealthier, security teams must adapt their frameworks to govern the lifecycle of machine learning components with the same due diligence applied to microservices, dependencies, and firmware. This report is a stark reminder: integrating intelligence doesn’t mean disabling common sense.
