Microsoft Uncovers One-Prompt LLM Safety Alignment Attack

A new Microsoft study reveals how a single adversarial prompt can fully bypass the safety alignment of large language models. CISOs must assess their AI risk posture.

Artificial intelligence systems introduce entirely new attack surfaces. This section analyzes AI security risks, including prompt injection attacks, model poisoning, adversarial machine learning, and vulnerabilities in large language models.

22 articles

The Clawdbot agent is gaining popularity for automating tasks, but hides significant cyber risk. CISOs must assess the implications of exposing systems to unsecured AI.

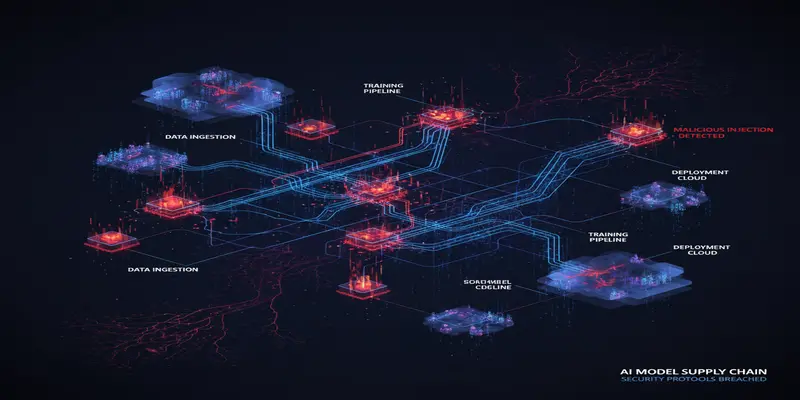

Microsoft researchers uncovered attacker-manipulated AI models with embedded backdoors, posing serious supply chain risks. CISOs must act now.

AI is driving a seismic shift in the 2026 cyber threat landscape. This threat intelligence report outlines critical risks, attacker methods, and mitigation paths.

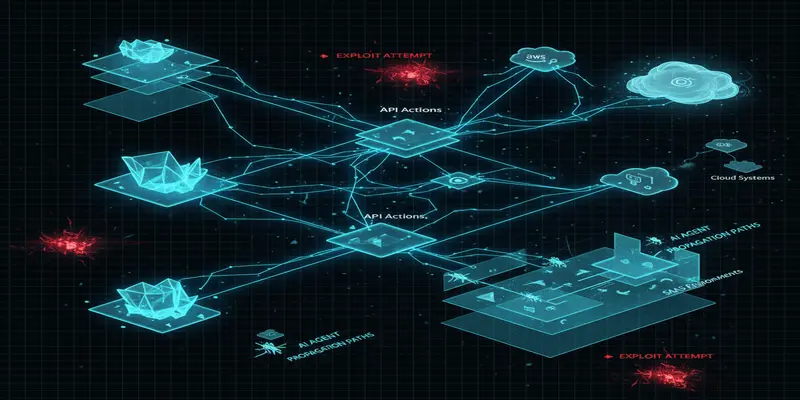

AI agents are creating dynamic new risks across enterprise environments. Microsoft’s latest insights highlight where CISOs must adapt defenses for real-time protection.

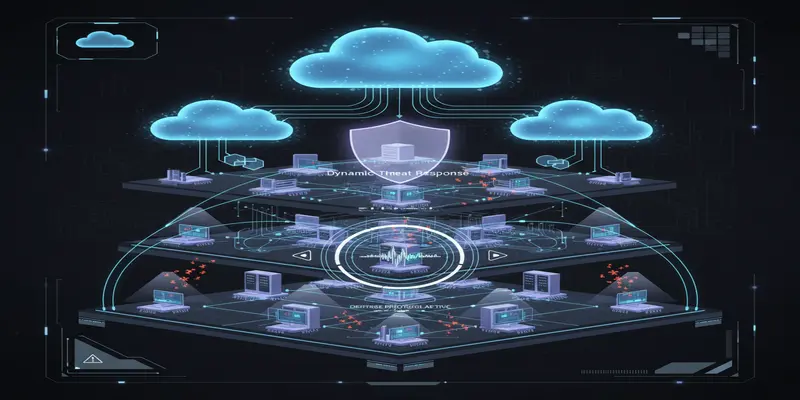

Microsoft unveils a new AI agent framework aimed at transforming enterprise cybersecurity posture. CISOs must reexamine operational defense strategies now.

A newly disclosed exploit known as Reprompt leverages Copilot session hijacking to inject attacker-controlled prompts via URLs. CISOs should assess Copilot exposure risks now.

Tool poisoning is a rising threat that targets AI agents through manipulated tool descriptions. CISOs must act decisively to safeguard agent integrity and data.

From agentic browsers to AI-powered toys, 2025 revealed dangerous oversights in AI security. CISOs must reassess integration risks before 2026.

CrowdStrike’s Falcon AIDR introduces a groundbreaking approach to securing AI interaction layers from emerging threats like prompt injection and agent hijacking.